The Million-Dollar AI Mistake

Architecting Reliable Systems in the LLM Era

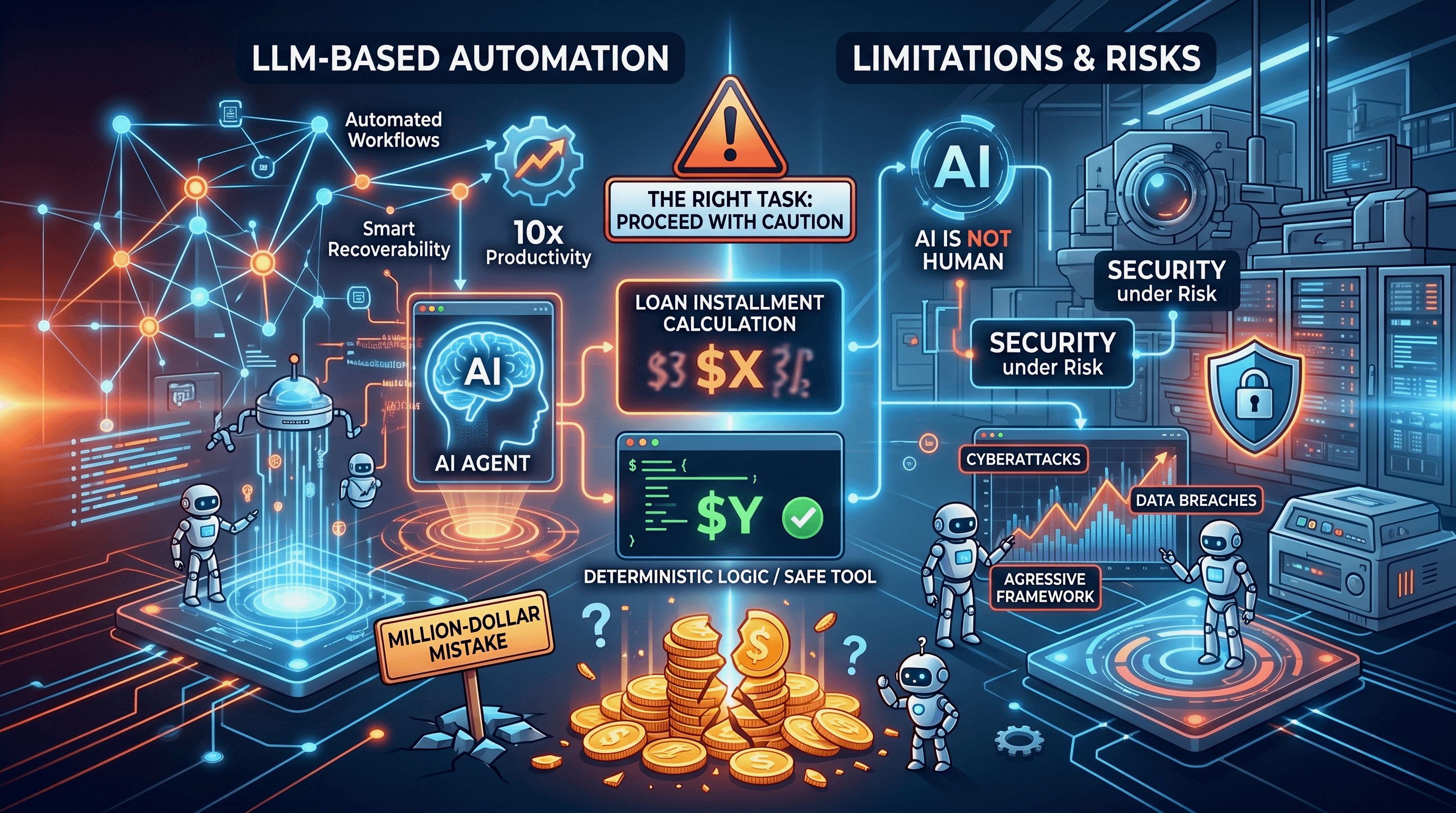

While designing a complex installment module with multiple variables, an engineer had a "bright" idea: "Instead of building this ourselves, let's just use AI. It can adjust the results on the fly." It was a million-dollar mistake.

LLMs have become a major focus today, with companies encouraging employees to utilize and deploy LLM-based solutions. The industry is moving in this direction for several key reasons:

Automated Workflows: We have always sought better automation. Generative AI provides outputs that far exceed the capabilities of traditional "if/else" logic.

Smart Recoverability: The right AI tools can act as "smart on-call robots," capable of identifying and resolving issues immediately.

10x Productivity: AI significantly reduces the time spent on repetitive tasks.

However, to achieve these benefits, we must first carefully understand AI's capabilities and its limitations. This is essential to avoid catastrophic surprises that could harm the business.

AI is not Human!

We often call these systems "agents," and that term can lead non-technical people to picture a human agent—someone waiting for orders with feelings and judgment. AI is not like that. Behind the scenes, it's a statistical/mathematical model that predicts the most likely next token (word) based on patterns learned from data. It has no feelings and makes no moral judgments.

Keeping this in mind pushes us to think twice before exposing an AI-based solution directly to the customer. We cannot afford to lose their trust. Ultimately, system reliability is far more critical than simply adding automation. Designing with this constraint ensures that our customers interact with a predictable, trustworthy platform rather than an unpredictable virtual hallucination.

Caution: Choosing the Right Task

Building an AI-driven system requires a clearly defined scope for what each AI component will handle. You can deploy a single agent or multiple agents working together, but if any agent is designed to perform tasks it shouldn't, the overall behavior can become unpredictable. Even with thorough testing, many issues only surface in production—often only detectable if you’ve already built a strong observability stack for the deployed agent system.

LLMs excel at understanding and generating text, but they are not engineered for reliable numerical computation or deterministic reasoning. The models work by matching patterns and estimating probabilities for the next token, supplemented by guardrails and rules that maintainers add to shape behavior. Because of this underlying mechanism, unexpected or incorrect outputs can appear even when the prompt seems straightforward.

For example, in the ChatGPT screenshot below you can see an unexpected result that probably wasn’t anticipated. But, if I executed the same logic with a Python interpreter, the answer would be 100% correct within a fraction of a second.

Imagine your AI assistant tells a customer your loan instalment is X*, but the real number is* Y*.* You need to understand this very well before you decide to deploy a LLM-based solution.

That's why agent-builder frameworks provide tool artifacts—dedicated interfaces that handle deterministic computations or external actions—and make them available to the model. Once you see this in practice, you'll appreciate how important a protocol like the Model Context Protocol (MCP) is for organizing inputs, outputs, and safe tool usage.

The Security Imperative

There are many resources on securing AI-based solutions, but I remain unconvinced by some of them. If your model has internet access, security must be a top priority. One report found 28 million AI-driven cyberattacks in 2025, 16% of which resulted in data breaches.

This reality should encourage every company to define an aggressive, clear framework. Teams must have strict policies and rules to follow when building and deploying AI-based solutions for specific tasks.

Conclusion

Generative AI is a tremendous engine for productivity, but it is not a silver bullet for every engineering challenge. As the "million-dollar mistake" illustrates, relying on probabilistic models for deterministic logic is a critical risk. To build truly reliable systems, we must define strict boundaries for our AI agents, leverage dedicated tool artifacts for computational tasks, and enforce aggressive security frameworks. Ultimately, maintaining customer trust means prioritizing system predictability over blind automation.